GMAT essay grader

12/17/2022

The stakes are too high to hope for magic on test day and expect a 5+ score in AWA by default.

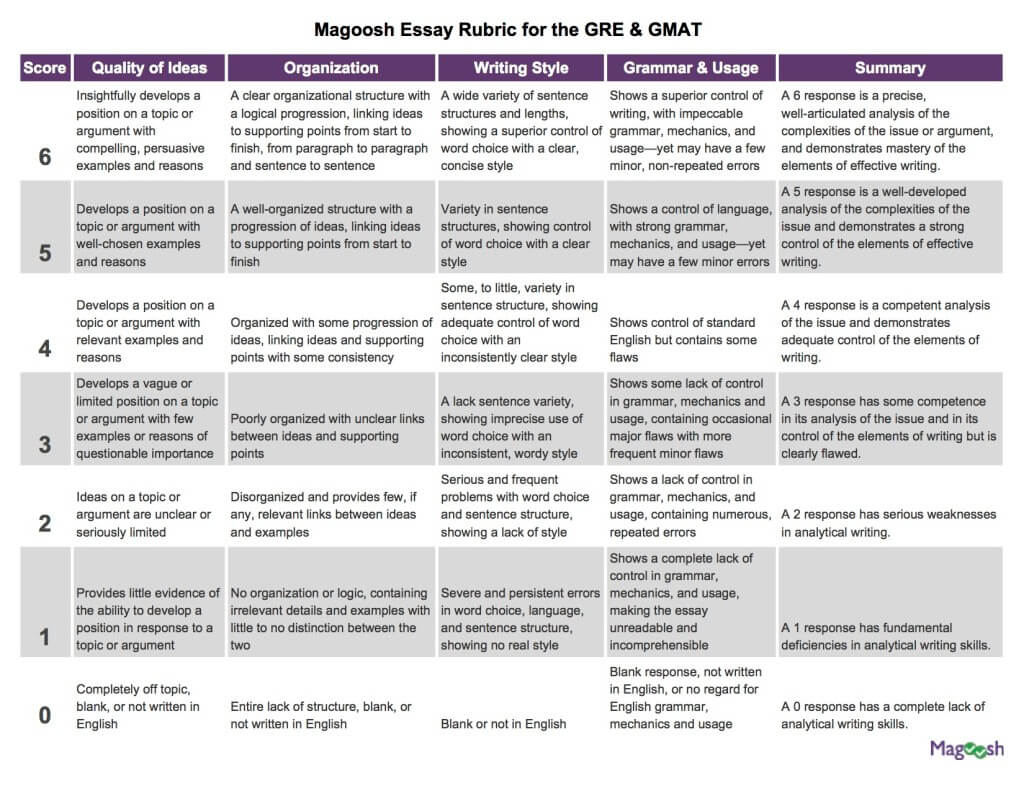

The Analytical Writing Assessment (AWA) is designed to measure your ability to think critically and to communicate your ideas. The AWA consists of one 30-minute essay: Analysis of an Argument. Candidates are asked to analyze the reasoning behind a given argument and write a critique of that argument. The final score (on a scale of 6) is a function of the evaluation by a human grader and a computerized evaluation.

For most prospective GMAT candidates, the Analytical Writing Assessment remains an enigma. Unlike the hundreds of mock tests that can be taken to improve and excel in the Verbal and Quantitative sections, there is not much on offer for excelling at AWA, even in the course formats of the top GMAT prep firms (be it grockit, e-GMAT, gmatclub or the best of the GMAT coaching institutions). The few simulators/e-raters of any quality that do exist are a major burden on the pocket. So in these times of self-education, candidates are on their own to figure out how to score well in this important section of the GMAT. At awaRatr™ we realize that there is a definite logic to achieving success in AWA for GMAT.

Grade AWA essay attempts online using an automated evaluator for free. The differentiating attribute of this software is its ability to let candidates write GMAT essays (AWA) and simulate the grading system, giving them insight into their areas for development. The software is continuously learning and improving itself. We encourage users to use it, play with it, learn from it and make it learn!

Evaluation: awaRatr is a neural network implementation that has been fed a large number of GMAT argument essay attempts collected from various sources on the internet, which were evaluated on close to thirty different parameters including, but not limited to, language, structure, cohesion and coherence.
awaRatr is a free essay grading software that aims to help GMAT takers understand, evaluate and improve their attempts at writing argument essays, targeting excellence in the AWA (Analysis of an Argument) section of the GMAT. It is designed specifically to comprehensively evaluate the text submitted as a GMAT essay attempt. The main functional parameter by which the software grades essays is that the AWA assignment is essentially designed to test the core skill of argument analysis, expressed coherently in English. awaRatr is inchoate and needs a lot of learning (in fact, it learns from each submitted attempt; more on that in the "How it works!" section).

Automated Essay Scoring (AES) refers to the Artificial Intelligence (AI) application with the “intelligence” to assess and score essays. There are several well-known commercial AES products adopted by western countries, as well as many research works investigating automated essay scoring. However, most of these products and research works are not related to the Malaysian English test context. The AES products tend to score essays based on the scoring rubrics of a particular English test context (e.g., TOEFL, GMAT) by employing proprietary scoring algorithms that are not accessible to users. In Malaysia, the research and development of AES are scarce. This paper intends to formulate a Malaysia-based AES, namely Intelligent Essay Grader (IEG), for the Malaysian English test environment by using our collection of two Malaysian University English Test (MUET) essay datasets. We proposed an essay scoring rubric based on language and semantic features. We analyzed the correlation of the proposed language and semantic features with the essay grade using the Pearson Correlation Coefficient. Furthermore, we constructed an essay scoring model to predict the essay grades. In our results, we found that language features such as vocabulary count and advanced part of speech were highly correlated with the essay grades, and that the language features showed a greater influence on essay grades than the semantic features. From our prediction model, we observed that the model yielded better accuracy results based on the selected high-correlated essay features, followed by the language features.

Keywords: scoring, intelligent system in education, machine learning, MUET, natural language processing.
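The correlation step mentioned in the abstract above can be illustrated with a minimal sketch. This is not the authors' implementation: the function names `pearson_r` and `vocabulary_count` are hypothetical, the definition of vocabulary count (distinct lowercase word tokens) is an assumption, and the sample essays and grades are made up purely for demonstration.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of products of deviations, and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


def vocabulary_count(essay):
    """Number of distinct lowercase word tokens (an assumed, crude feature)."""
    words = (w.strip(".,;:!?\"'()").lower() for w in essay.split())
    return len({w for w in words if w})


# Made-up sample data, for illustration only.
essays = [
    "The argument is weak.",
    "The argument assumes, without evidence, that sales will rise.",
    "The author overlooks alternative explanations and relies on an "
    "unrepresentative survey.",
]
grades = [2, 4, 5]  # hypothetical grades assigned to the essays above

features = [vocabulary_count(e) for e in essays]
r = pearson_r(features, grades)
print(f"vocabulary counts: {features}, Pearson r with grades: {r:.3f}")
```

A value of `r` close to 1 would indicate that the feature rises with the grade. With a real dataset, the same calculation could be repeated for each candidate feature to pick the most highly correlated ones before fitting a scoring model.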
